Difference between AI, ML, LLM, and generative AI

Updated March 2026

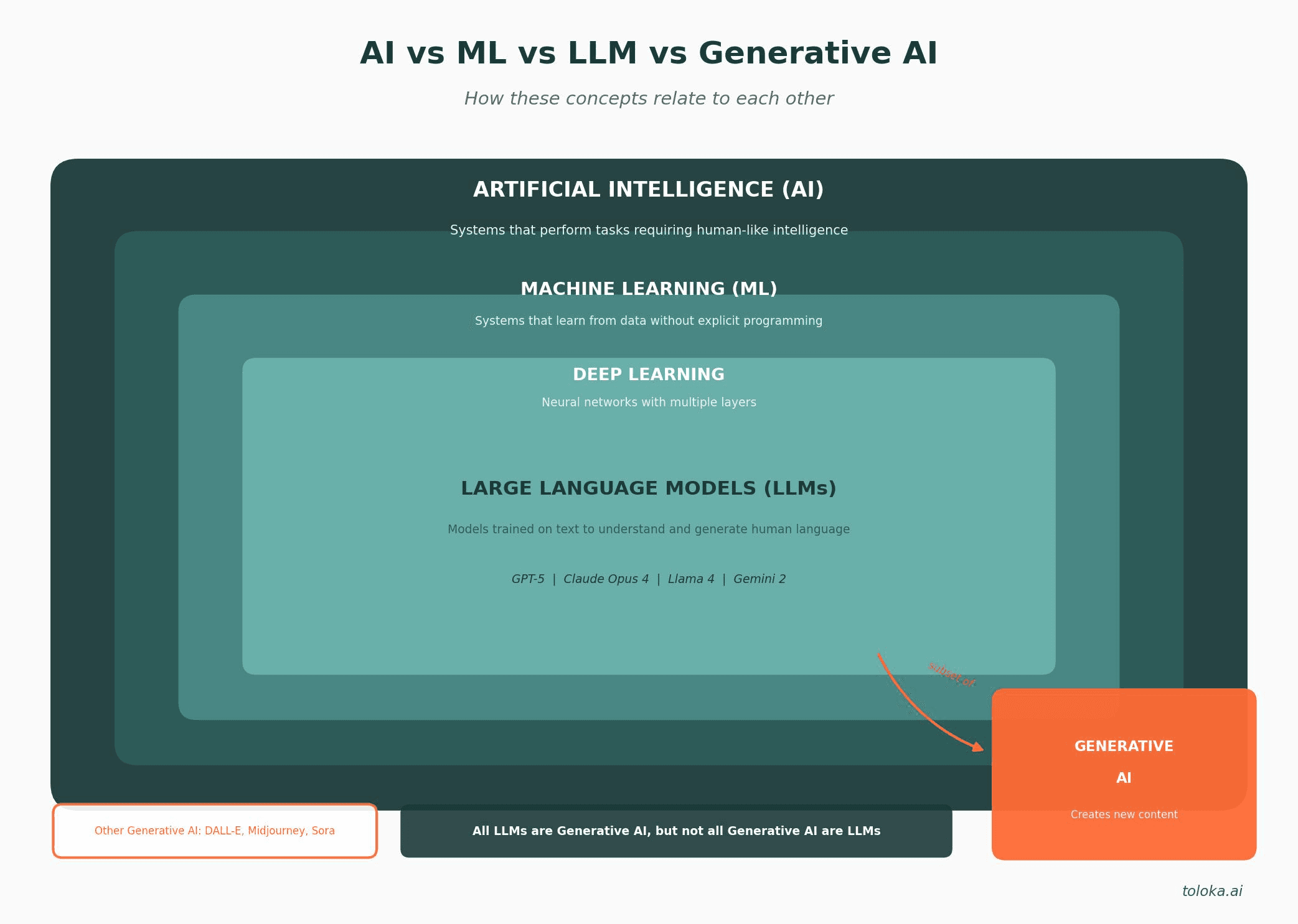

If you are new to artificial intelligence, the terminology can be overwhelming. Artificial Intelligence (AI), Machine Learning (ML), Large Language Models (LLMs), and Generative AI are related concepts in computer science, but they differ significantly in how they work and what they are used for. This article takes a closer look at each concept and at the distinctions between them.

Quick definitions: AI vs ML vs LLM vs generative AI

AI (Artificial Intelligence): The broad field of computer science focused on creating systems that can perform tasks requiring human-like intelligence, including reasoning, learning, and problem-solving.

ML (Machine Learning): A subset of AI where systems learn patterns from data without being explicitly programmed for each task. ML enables AI systems to improve through experience.

LLM (Large Language Model): A type of deep learning model trained on massive amounts of text data to understand and generate human language. Examples include GPT-5, Claude Opus 4, and Llama 4.

Generative AI: AI systems capable of creating new content such as text, images, code, audio, or video. LLMs are one category of generative AI, alongside image generators like DALL-E and Midjourney.

How AI, ML, LLM, and generative AI relate to each other

Understanding the relationship between these concepts is easier when you visualize them as nested categories, where each builds upon the previous:

Artificial Intelligence (AI)
└── Machine Learning (ML)
    └── Deep Learning
        └── Large Language Models (LLMs)
            └── [Subset of Generative AI]

In this hierarchy, AI is the broadest category. Machine learning is a specific approach within AI. Deep learning is a subset of machine learning using neural networks. LLMs are a specialized type of deep learning model focused on language. And generative AI is a capability that spans multiple AI approaches, with LLMs being one of the most prominent examples.
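
The nesting described above can be sketched as a small lookup structure. This is only an illustration: the `PARENT` mapping and the helper names are hypothetical, and generative AI is left out of the chain on purpose because it is a capability that cuts across approaches rather than a single level of the hierarchy.

```python
# Each term maps to the broader category that contains it (None = top level).
PARENT = {
    "Artificial Intelligence": None,
    "Machine Learning": "Artificial Intelligence",
    "Deep Learning": "Machine Learning",
    "Large Language Models": "Deep Learning",
}


def ancestors(term: str) -> list[str]:
    """Return every broader category that contains `term`, nearest first."""
    chain = []
    parent = PARENT[term]
    while parent is not None:
        chain.append(parent)
        parent = PARENT[parent]
    return chain


def is_subset_of(term: str, category: str) -> bool:
    """True if `term` sits somewhere inside `category` in the hierarchy."""
    return category in ancestors(term)


print(ancestors("Large Language Models"))
# ['Deep Learning', 'Machine Learning', 'Artificial Intelligence']
```

Reading the output from left to right walks from the most specific category to the broadest one.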

Artificial Intelligence

AI is the branch of computer science concerned with building computer systems that can perform tasks that typically require human intelligence, such as speech recognition, natural language processing (NLP), text generation and translation, video, sound, and image generation, decision making, and planning.

AI, in general, refers to the development of intelligent systems that can mimic human behavior and decision-making processes. It encompasses techniques and approaches enabling machines to perform tasks, analyze visual and textual data, and respond or adapt to their environment. One of the key advantages of artificial intelligence is its ability to process large amounts of data and find patterns in it. AI tools are designed to make decisions or take actions based on that knowledge.

AI has applications in many fields, including marketing, medicine, finance, science, education, and industry. For example, in marketing it is used to generate marketing materials, in medicine to help diagnose diseases, and in finance to analyze financial markets and inform investment decisions.

Types of AI: From narrow to general intelligence

AI can be classified in several ways. One common scheme orders AI along a hypothetical path of evolution. Under this scheme, every form of AI that exists today is narrow AI (also called weak AI), because it is limited to a specific area of cognition. Narrow AI lacks human consciousness, although it can simulate it in some situations. Current LLMs like GPT-5 and Claude Opus 4, despite their impressive capabilities, are still considered narrow AI because they excel at language tasks but cannot perform arbitrary human activities.

The next stage of AI development may be a form that is so far only conceptual: artificial general intelligence (AGI), endowed with human-level reasoning across all cognitive domains. AGI would be capable of performing human tasks, reasoning, and learning from experience. It would no longer merely "mimic" human behavior but would think in a way comparable to a human being. As of 2026, AGI remains a goal rather than reality, though recent advances in reasoning models like OpenAI's o3 and GPT-5 represent significant steps toward more general capabilities.

The theoretical peak of AI development is artificial superintelligence (ASI, also called Super AI), which would outperform humans in all areas. AI can also be categorized by function, as discriminative or generative.

Discriminative and generative AI

Discriminative and generative AI are two different approaches to building AI systems. Discriminative AI focuses on learning the boundaries that separate different classes or categories in the training data. These models do not aim to generate new samples, but rather to classify or label input data based on what class it belongs to. Discriminative models are trained to identify the patterns and features that are specific to each class and make predictions based on those patterns.

Discriminative models are often used for tasks such as classification, regression, sentiment analysis, and object detection. Examples of discriminative AI include logistic regression, decision trees, and random forests.

In contrast to discriminative AI, generative AI focuses on building models that can generate new data similar to the training data they have seen. Generative models learn the underlying probability distribution of the training data and can then generate new samples from this learned distribution.

Generative AI is capable of image synthesis, text generation, code creation, music composition, and video production. Such systems typically involve deep learning and neural networks to learn patterns and relationships in the training data. They use that knowledge to create new content. Examples of generative AI models include Generative Adversarial Networks (GANs), Variational Autoencoders (VAEs), transformer and diffusion models, and many more.
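
The contrast between the two approaches can be sketched on a toy one-dimensional dataset. This is a minimal illustration under stated assumptions, not how production systems are built: the height data is made up, the discriminative part is a one-level decision tree (a single threshold), and the generative part assumes each class follows a Gaussian distribution. All names are hypothetical.

```python
import random
import statistics

# Made-up 1-D training data: heights in cm for two classes.
data = {
    "child": [110.0, 115.0, 120.0, 125.0, 130.0],
    "adult": [160.0, 165.0, 170.0, 175.0, 180.0],
}
points = sorted((h, label) for label, hs in data.items() for h in hs)


# Discriminative: search directly for the threshold that separates the
# classes with the fewest training errors (a one-level decision tree).
def training_errors(threshold: float) -> int:
    return sum((h >= threshold) != (label == "adult") for h, label in points)


candidates = [(a[0] + b[0]) / 2 for a, b in zip(points, points[1:])]
boundary = min(candidates, key=training_errors)


def classify(height: float) -> str:
    """Label a new input using only the learned boundary."""
    return "adult" if height >= boundary else "child"


# Generative: estimate each class's distribution (assumed Gaussian here),
# then draw brand-new samples from that learned distribution.
params = {
    label: (statistics.mean(hs), statistics.stdev(hs))
    for label, hs in data.items()
}


def sample_heights(label: str, n: int, seed: int = 0) -> list[float]:
    """Generate n new, synthetic heights for the given class."""
    rng = random.Random(seed)
    mean, stdev = params[label]
    return [rng.gauss(mean, stdev) for _ in range(n)]


print(classify(150.0))             # label for an unseen input
print(sample_heights("adult", 3))  # three newly generated data points
```

The classifier keeps only a boundary and cannot produce new data, while the generative model keeps a description of the data itself and can sample from it.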

Modern generative AI relies heavily on foundation models, which play a significant role in advancing it. Foundation models are large-scale models, pretrained on broad data, that serve as the backbone of many AI systems. By leveraging the knowledge a foundation model has learned, generative AI systems can produce high-quality and contextually relevant content. These models have seen tremendous progress, allowing them to generate human-like text, answer questions, write essays, create stories, produce working code, and much more.

Starting from a foundation model, we can build more specialized and advanced models designed for particular domains or use cases. For instance, a foundation model can serve as the core of a large language model: by drawing on the knowledge learned from vast amounts of text data, it can generate coherent, contextually relevant text that often resembles human writing.

Machine learning vs. AI

Machine Learning is a subset of AI that focuses on giving systems the ability to learn and improve from experience without being explicitly programmed. ML is a critical component of many AI systems: ML algorithms train models on datasets containing labeled examples or historical data. The model learns the underlying patterns in the training data, which enables it to make accurate predictions or decisions on new, unseen data. When ML models are retrained or updated with fresh data, they can adapt and improve their performance over time.

AI encompasses the broader concept of developing intelligent machines, while ML focuses on training systems to learn and make predictions from data. AI aims to replicate human-like behavior, while ML enables machines to automatically learn patterns from data.

A machine learning model in AI is a mathematical representation or algorithm that is trained on a dataset to make predictions or take actions without being explicitly programmed. It is a fundamental component of AI systems as it enables computers to learn from data and improve performance over time.
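
As a minimal sketch of what "learning from data" means, the example below fits a straight line to a handful of made-up data points using ordinary least squares, then predicts a value for an input it has never seen. The dataset and the function names are assumptions chosen for illustration; the point is that the slope and intercept are learned from the examples rather than written by hand.

```python
# Made-up training data: hours studied -> exam score.
hours = [1.0, 2.0, 3.0, 4.0, 5.0]
scores = [52.0, 55.0, 57.0, 58.0, 63.0]


def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Learn slope and intercept with ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(x: float, slope: float, intercept: float) -> float:
    """Apply the learned parameters to a new, unseen input."""
    return slope * x + intercept


slope, intercept = fit_line(hours, scores)
print(slope, intercept)               # the learned parameters
print(predict(6.0, slope, intercept)) # prediction for an unseen input
```

Nothing in the code states how score depends on hours; that relationship is estimated entirely from the training examples.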

Where do LLMs fit in machine learning?

LLMs are a specific application of deep learning, which is itself a subset of machine learning. The relationship can be understood as: Machine Learning → Deep Learning → Transformer Neural Networks → Large Language Models.

While traditional machine learning models might use thousands or millions of parameters to recognize patterns, LLMs use billions or even trillions of parameters. This massive scale, combined with the transformer architecture introduced in 2017, allows LLMs to capture complex language patterns that smaller models cannot.

Key differences between traditional ML and LLMs:

| Aspect | Traditional ML | LLMs |
| --- | --- | --- |
| Parameters | Thousands to millions | Billions to trillions |
| Training data | Task-specific datasets | Massive text corpora (internet-scale) |
| Task flexibility | Single task per model | Many tasks via prompting |
| Training time and cost | Hours to days | Weeks to months, millions of dollars |
| Examples | Spam classifier, recommendation engine | GPT-5, Claude Opus 4, Llama 4 |
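
To get a feel for where "billions of parameters" comes from, a widely used rule of thumb estimates the non-embedding parameters of a transformer as roughly 12 × layers × width². The sketch below applies it. It assumes a standard transformer block whose feed-forward layer is four times the model width, and it ignores embeddings, biases, and layer norms, so treat the results as rough estimates. The function name is hypothetical, and the GPT-3 configuration is the publicly reported one (96 layers, width 12288).

```python
def approx_transformer_params(n_layers: int, d_model: int) -> int:
    """Rough non-embedding parameter count for a standard transformer.

    Per layer: attention uses about 4 * d_model^2 weights (query, key,
    value, and output projections) and the feed-forward block about
    8 * d_model^2 (two matrices of size d_model x 4*d_model).
    """
    return 12 * n_layers * d_model ** 2


# A tiny toy model versus the reported GPT-3 configuration.
print(approx_transformer_params(n_layers=2, d_model=64))      # 98304
print(approx_transformer_params(n_layers=96, d_model=12288))  # 173946175488
```

The second figure, about 174 billion, lands close to the 175 billion parameters usually quoted for GPT-3, which shows how quickly the count grows with depth and width.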

Generative AI vs. large language models

Generative AI is a broad concept that covers many forms of content generation, while an LLM is one specific application of it. Generative AI spans tasks beyond language, including image and video generation and music composition. Large language models are designed specifically for natural language generation and comprehension, and they serve as foundation models for a wide range of natural language processing (NLP) tasks.

Large language models learn patterns and relationships between words and phrases from extensive datasets. They are trained on vast amounts of text, drawn from the Internet, books, and other sources, to learn the statistical patterns, grammar, and semantics of human language.
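
The idea of learning statistical patterns from text can be illustrated with a bigram model, which is far simpler than an LLM but rests on the same principle of predicting the next word from what came before. This is a toy sketch: the corpus is made up, the function names are hypothetical, and a real LLM uses a neural network over subword tokens rather than a table of word counts.

```python
import random
from collections import Counter, defaultdict

# A made-up miniature corpus; real models train on billions of words.
corpus = "the cat sat on the mat . the dog sat on the rug ."


def train_bigram(text: str) -> dict:
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def next_word_probs(counts: dict, word: str) -> dict:
    """Turn raw follow-up counts into a probability distribution."""
    followers = counts.get(word, {})
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()}


def generate_text(counts: dict, start: str, length: int, seed: int = 0) -> str:
    """Sample one word at a time from the learned distribution."""
    rng = random.Random(seed)
    output = [start]
    for _ in range(length):
        followers = counts.get(output[-1])
        if not followers:
            break  # no known continuation for this word
        words, weights = zip(*followers.items())
        output.append(rng.choices(words, weights=weights)[0])
    return " ".join(output)


model = train_bigram(corpus)
print(next_word_probs(model, "sat"))  # {'on': 1.0}
print(generate_text(model, "the", 5))
```

In this corpus "sat" is always followed by "on", so the model assigns that continuation a probability of 1.0, while "the" has four equally likely continuations.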

An LLM takes an input (a sentence or a prompt) and generates a response: coherent, contextually relevant sentences or even whole paragraphs. To process the input and produce the output, the model relies on a neural network built on the transformer architecture and its attention mechanisms. For a deeper understanding of the technical foundations, see our guide on how LLMs work.
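
The attention mechanism mentioned above can be sketched in a few lines. The example implements standard scaled dot-product attention, softmax(QKᵀ / √d) · V, in plain Python for readability. The function names and the tiny input vectors are made up for illustration; real models run this on large matrices with many attention heads and learned projections.

```python
import math


def softmax(xs: list[float]) -> list[float]:
    """Convert scores to weights that are positive and sum to 1."""
    largest = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - largest) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of vectors."""
    d = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys
        ]
        weights = softmax(scores)
        # Output is the weighted average of the value vectors.
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs


# The query points the same way as the first key, so the output is
# dominated by the first value vector.
result = attention(
    queries=[[10.0, 0.0]],
    keys=[[1.0, 0.0], [0.0, 1.0]],
    values=[[1.0], [0.0]],
)
print(result)
```

Each output is a blend of the value vectors, weighted by how closely the query matches each key, which is how a model decides which earlier words matter most for the current one.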

Both generative AI and large language models involve the use of deep learning and neural networks. While generative AI aims to create original content across various domains, large language models specifically concentrate on language-based tasks and excel in understanding and generating human-like text.

Large language model applications

Large language models can perform a wide range of language tasks, including answering questions, writing articles, translating languages, and creating conversational agents, making them extremely valuable tools for various industries and applications.

Code generation and development: By providing a prompt or specific instructions, developers can use large language models as code generation tools to write code snippets, functions, or even entire programs. This is useful for automating repetitive tasks, prototyping, and exploring new ideas quickly. It can save developers considerable time and effort on boilerplate code and can also help with knowledge transfer.

Customer service and chatbots: Companies are employing large language models to develop intelligent chatbots. They can enhance customer service by offering quick and accurate responses, improving customer satisfaction, and reducing human workload.

Content creation and automation: Large language models can help businesses automate content creation processes, saving time and resources. Language models also assist with content curation by analyzing and summarizing large volumes of information from various sources.

Data analysis and insights: Businesses process and analyze unstructured text data more effectively with the help of large language models. They can fulfill tasks like text classification, information extraction, sentiment analysis, and more. All of this plays a big role in understanding customer behavior and predicting market trends.

Agentic AI and autonomous systems: During 2025 and 2026, LLMs evolved beyond simple text generation to power AI agents that can plan, reason, and take actions. These agentic systems can browse the web, execute code, manage files, and complete multi-step tasks autonomously. Agentic AI is now one of the fastest-growing applications of LLM technology.
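
The plan, act, observe cycle behind agentic systems can be sketched as a simple loop. Everything here is a hypothetical stand-in: `scripted_model` replaces a real LLM call with fixed decisions, and the two arithmetic tools stand in for real tools such as a browser or a code sandbox. No real agent framework or API is being shown.

```python
from typing import Callable


# Hypothetical tools the agent may call.
def add(a: float, b: float) -> float:
    return a + b


def multiply(a: float, b: float) -> float:
    return a * b


TOOLS: dict[str, Callable[..., float]] = {"add": add, "multiply": multiply}


def scripted_model(goal: str, history: list) -> dict:
    """Stand-in for an LLM: picks the next action from what it has seen."""
    if len(history) == 0:
        return {"tool": "add", "args": (2, 3)}
    if len(history) == 1:
        return {"tool": "multiply", "args": (history[-1], 4)}
    return {"final": history[-1]}


def run_agent(goal: str, model: Callable, max_steps: int = 5):
    """Loop: ask the model for an action, run the tool, record the result."""
    history = []
    for _ in range(max_steps):
        action = model(goal, history)
        if "final" in action:
            return action["final"]
        result = TOOLS[action["tool"]](*action["args"])
        history.append(result)  # the observation fed back to the model
    raise RuntimeError("agent did not finish within max_steps")


answer = run_agent("compute (2 + 3) * 4", scripted_model)
print(answer)  # 20
```

The step limit matters in practice: without it, a model that never declares a final answer would loop indefinitely.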

Popular LLMs in 2026

The LLM landscape continues to evolve rapidly. Here are the most influential large language models shaping the field today:

GPT-5 and GPT-5.2 (OpenAI)

OpenAI's GPT-5 series represents the current frontier of their capabilities. GPT-5.2 builds on the foundations of GPT-4 and the o-series reasoning models, combining multimodal understanding with advanced reasoning capabilities. The model demonstrates significant improvements in complex problem-solving, coding, and agentic tasks. OpenAI continues to refine the balance between capability and safety in this latest generation.

Claude Opus 4.5 and Sonnet 4.5 (Anthropic)

Anthropic's Claude 4.5 family represents the latest in their Constitutional AI approach. Claude Opus 4.5 is the most capable model, excelling at complex reasoning, coding, and nuanced analysis. Claude Sonnet 4.5 offers an excellent balance of capability and speed for everyday tasks. The Claude models are known for their extended context windows, strong safety properties, and exceptional performance on coding and analysis tasks.

Gemini 2.0 and 2.5 (Google DeepMind)

Google's Gemini models are natively multimodal, trained from the ground up on text, images, audio, and video. Gemini 2.5 continues to push the boundaries of context length and multimodal understanding. The models feature enhanced agentic capabilities and improved reasoning, with deep integration into Google's ecosystem of products and services.

Llama 4 (Meta)

Meta's Llama 4 continues the tradition of leading open-weight LLMs. Building on the success of Llama 3, the latest generation offers improved capabilities across reasoning, coding, and multilingual tasks. The open-weights release enables organizations to deploy capable models on their own infrastructure, addressing data privacy and sovereignty concerns while fostering a vibrant ecosystem of fine-tuned variants.

DeepSeek R1 and V3 (DeepSeek)

DeepSeek made headlines with R1, an open-source reasoning model that demonstrated frontier capabilities could be achieved at a fraction of competitors' training costs. DeepSeek V3 continues this efficiency-focused approach, offering competitive performance with significantly lower resource requirements. Their work has challenged assumptions about the resources required to build capable AI systems.

Mistral Large 2 (Mistral AI)

The French startup Mistral AI has established itself as a major player with efficient models that punch above their weight. Mistral Large 2 competes with much larger models and offers deployment options that support European data sovereignty. The company's Mixtral models use a sparse Mixture-of-Experts architecture, which enables efficient inference without sacrificing capability.

Earlier influential models

While newer models dominate today, several earlier LLMs remain historically significant. BERT (Google, 2018) pioneered bidirectional training and remains widely used for classification tasks. GPT-3 (OpenAI, 2020) demonstrated that scale unlocks emergent capabilities. GPT-4 (OpenAI, 2023) set new standards for multimodal understanding. For more context on how these models evolved, see our article on the history of LLMs.

The evolution of LLMs: 2025-2026 breakthroughs

The AI landscape has transformed significantly over the past two years. Several key developments have reshaped what LLMs can do and how they're built:

Reasoning at scale: The reasoning paradigm introduced by OpenAI's o1 and o3 has become standard across frontier models. Models now routinely "think" through problems using chain-of-thought processes, dramatically improving performance on mathematics, coding, and complex analysis. GPT-5 and Claude Opus 4.5 integrate these capabilities natively.

Multimodal as default: Processing text, images, audio, and video in a single model is now standard rather than exceptional. Modern LLMs can engage in real-time voice conversations, analyze complex visual scenes, and generate content across modalities. This moves LLMs from text-only tools to general-purpose AI assistants.

Agentic capabilities: LLMs evolved from passive responders to active agents. They can now browse the web, write and execute code, manage files, operate desktop applications, and complete complex multi-step tasks autonomously. Computer-use agents have become practical tools for automating workflows.

Extended context and memory: Context windows have expanded dramatically, with some models handling millions of tokens. Combined with improved retrieval and memory systems, LLMs can now work with entire codebases, document collections, or conversation histories spanning months.

Open-source maturity: Meta's Llama series, Mistral's models, and DeepSeek's releases have created a mature open-source ecosystem. Organizations can now run highly capable LLMs on their own infrastructure, with performance approaching proprietary models.

Efficiency breakthroughs: DeepSeek's demonstration that frontier AI doesn't require frontier budgets has reshaped the competitive landscape. Improved training techniques, better architectures, and more efficient inference have made powerful AI more accessible to a wider range of organizations.

Frequently asked questions

What is an LLM in AI?

An LLM (Large Language Model) is a type of artificial intelligence model trained on massive amounts of text data to understand and generate human language. LLMs use deep learning techniques, specifically transformer neural networks, to learn patterns in language. They can answer questions, write content, translate languages, summarize documents, and perform many other language tasks. Popular examples include GPT-5, Claude Opus 4, Llama 4, and Gemini 2.

What does LLM stand for?

LLM stands for Large Language Model. The "large" refers to the billions or trillions of parameters these models contain, and the massive datasets (often spanning much of the internet) used to train them. "Language model" indicates their primary function: predicting and generating human language based on statistical patterns learned during training.

Is an LLM the same as generative AI?

No, but they're closely related. Generative AI is the broader category that includes any AI system capable of creating new content, including text, images, audio, video, and code. LLMs are one specific type of generative AI focused on language. Other generative AI systems include image generators (DALL-E, Midjourney, Stable Diffusion), music generators, and video synthesis models. All LLMs are generative AI, but not all generative AI systems are LLMs.

Are LLMs a type of machine learning?

Yes, LLMs are a specialized form of machine learning. More specifically, LLMs are built using deep learning, which is a subset of machine learning that uses neural networks with many layers. The hierarchy is: Machine Learning → Deep Learning → Transformer Models → Large Language Models. LLMs learn from data (text) to make predictions (generate language), which is the core definition of machine learning.

What is the difference between AI, ML, and LLM?

These terms represent nested categories. AI (Artificial Intelligence) is the broadest term, referring to any system designed to perform tasks requiring human-like intelligence. ML (Machine Learning) is a subset of AI where systems learn from data rather than being explicitly programmed. LLM (Large Language Model) is a specific type of ML model trained on text data to understand and generate language. Think of it as: AI contains ML, and ML contains LLMs (along with many other model types).

What are the different types of AI?

AI can be categorized in several ways. By capability: Narrow AI (performs specific tasks, like all current AI), General AI/AGI (human-level reasoning across domains, not yet achieved), and Super AI (surpasses human intelligence, theoretical). By function: Discriminative AI (classifies and labels data) and Generative AI (creates new content). By approach: machine learning, deep learning, reinforcement learning, symbolic AI, and hybrid systems.

Conclusion

Artificial Intelligence (AI), Machine Learning (ML), Large Language Models (LLMs), and Generative AI are all related concepts in the field of computer science, but there are important distinctions between them. Understanding the differences matters because each term describes a different aspect of AI.

In summary, AI is a broad field covering the development of systems that simulate intelligent behavior, and it encompasses many techniques and approaches. Machine learning is a subfield of AI that focuses on designing algorithms that enable systems to learn from data. Large language models are a specific type of ML model trained on text data to generate human-like text, and generative AI refers to the broader concept of AI systems capable of generating various types of content.

ML, LLMs, and generative AI are just a few of the many terms used in AI. Knowing how they differ makes it easier to understand what each technology can do and where it is used. As the AI landscape continues to evolve, with breakthroughs like reasoning models, multimodal systems, and agentic AI, new concepts will inevitably appear and the terminology used to describe these systems will keep changing.

Building AI systems that need high-quality training data?

The Toloka Platform delivers high-quality human expert data for LLM training, RLHF, and model evaluation. Access specialists across 90+ fields with AI-assisted project setup and built-in quality assurance. Get started with the data your models need.
